Introduction

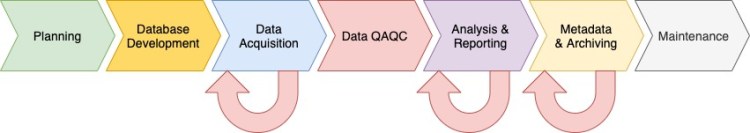

In the previous post I provided an overview of the analysis and report stage of the data pipeline (Figure 1). In this post I’ll provide an overview of the metadata and archiving stage. At this stage in the pipeline your project is mostly complete, and you’re ready to prepare your data for long-term storage. Ideally, the data management plan that you prepared during the planning phase includes a section on data archiving and metadata, in which case you can use it to guide you through the metadata and archiving process.

The basic steps for this phase are described below, followed by some ideas to help streamline metadata development and archiving.

What is Metadata and Archiving?

Data archiving is the process of preparing data for long-term storage, and includes developing metadata. Metadata is data about a dataset, and it’s as important as the dataset itself. It provides a description of a dataset, including the intended purpose of the data, and descriptions of the data tables and columns. Basic metadata also typically includes the name and contact information of the data originator and maintainer.

Although metadata and archiving is one of the last stages in the data pipeline, elements of it occur in every step of the pipeline. It begins in the planning phase, when you include funding in your budget and a section on data archiving and metadata development in your data management plan. In the database development phase, metadata can be included in the database itself to help streamline metadata preparation, and database views can be used to prepare data for archiving (see below, Ideas for Streamlining Metadata & Archiving). In the data acquisition phase, the protocols are an integral part of the metadata, and all raw field data and files will become part of the final data archive, so they require proper organization and management. Data QA/QC is also an integral part of the archiving process, as indicated by the red arrow in Figure 1 circling back into the Metadata & Archiving stage; specifically, all metadata files should be reviewed for consistency and accuracy. In the analysis and reporting stage, the final data analysis files (ideally annotated using a markup language) should be included with the data archive.

Basic Metadata & Archiving Steps

The basic steps are listed below, after which I’ll provide a brief description of each:

- Identify and compile files for data archive

- Identify database tables and columns for archiving

- Prepare metadata

- Export data, or otherwise process data for archiving

Compile Files: In this step you’ll make a list of all the files to include in the data archive and compile them in a central location. Files for archiving may include protocol documents, photographs, original and scanned copies of paper data forms, text backup files (digital data capture only), Global Positioning System (GPS) files, and data analysis markup files.
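Once the files are compiled, the list itself can be generated rather than typed by hand. The Python sketch below is one minimal way to do that, assuming all archive files already sit under a single folder; the function name `write_file_manifest`, the manifest file name, and its column names are hypothetical choices for illustration, not a standard.

```python
import csv
import tempfile
from pathlib import Path


def write_file_manifest(archive_dir, manifest_name="file_manifest.csv"):
    """Write a CSV listing every file under archive_dir (hypothetical helper).

    Each row holds the path relative to archive_dir and the file size in bytes.
    Returns the path of the manifest file.
    """
    root = Path(archive_dir)
    manifest_path = root / manifest_name
    # Collect files recursively, skipping the manifest itself if it already exists.
    files = sorted(p for p in root.rglob("*") if p.is_file() and p != manifest_path)
    with open(manifest_path, "w", newline="", encoding="utf-8") as f:
        writer = csv.writer(f)
        writer.writerow(["relative_path", "size_bytes"])
        for p in files:
            # Forward slashes keep the manifest identical across operating systems.
            writer.writerow([p.relative_to(root).as_posix(), p.stat().st_size])
    return manifest_path


if __name__ == "__main__":
    # Demo on a throwaway folder; point this at your real archive folder instead.
    with tempfile.TemporaryDirectory() as folder:
        (Path(folder) / "protocol.txt").write_text("Field protocol", encoding="utf-8")
        manifest = write_file_manifest(folder)
        print(manifest.read_text(encoding="utf-8"))
```

Because the manifest is rebuilt from the folder contents, re-running it after files are added or removed keeps the list current.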

Identify Database Tables and Columns: In this step you’ll make a list of the database tables, and columns within each, that will be archived. Also in this step you should list all data dictionaries that should be included with the data archive.
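If table and column descriptions are stored in the database, the list of what to archive can come from a query instead of a hand-maintained list. The sketch below uses Python’s built-in `sqlite3` module with an in-memory SQLite database as a stand-in for PostgreSQL; the `column_metadata` table, its `include_in_archive` flag (like the boolean column suggested under Database Design), and the sample rows are all hypothetical. In PostgreSQL, a similar list could be pulled from the `information_schema.columns` view, with descriptions read from column comments via the `col_description()` function.

```python
import sqlite3


def columns_to_archive(conn):
    """Return (table_name, column_name, description) rows flagged for archiving.

    Assumes a hypothetical column_metadata table with an include_in_archive flag.
    """
    query = """
        SELECT table_name, column_name, description
        FROM column_metadata
        WHERE include_in_archive = 1
        ORDER BY table_name, column_position
    """
    return conn.execute(query).fetchall()


def build_example_db():
    """Create an in-memory database holding a small, made-up metadata table."""
    conn = sqlite3.connect(":memory:")
    conn.executescript("""
        CREATE TABLE column_metadata (
            table_name         TEXT NOT NULL,
            column_name        TEXT NOT NULL,
            column_position    INTEGER NOT NULL,
            description        TEXT,
            include_in_archive INTEGER NOT NULL DEFAULT 1,  -- SQLite stores booleans as 0/1
            PRIMARY KEY (table_name, column_name)
        );
        INSERT INTO column_metadata VALUES
            ('photo_data', 'serial',         1, 'Autopopulated unique serial identifier.', 0),
            ('photo_data', 'plot_id',        2, 'Unique code identifying the field plot.', 1),
            ('photo_data', 'photo_filename', 3, 'File name of the photograph.',            1);
    """)
    return conn


if __name__ == "__main__":
    conn = build_example_db()
    # Print one line per archived column, e.g. to paste into a data dictionary.
    for table, column, description in columns_to_archive(conn):
        print(f"{table}.{column}: {description}")
```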

Prepare Metadata: In this step you’ll prepare the metadata for archiving. Consider starting your metadata with a top-level README file that describes how the metadata is organized and includes contact information for the data originator and maintainer. For files, metadata may include README files in simple text format that describe each file; for data tables and columns, it may include a spreadsheet with table and column descriptions. More sophisticated workflows may include separate tables in the database designed specifically to store table and column descriptions. Other options for preparing metadata include online metadata preparation software such as mdEditor.
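A top-level README can also be generated from a script, which makes it easy to refresh when contacts or files change. This is a minimal Python sketch; the function name `write_readme`, its parameters, the README layout, and the placeholder names and email addresses are hypothetical, not a required format.

```python
import tempfile
from pathlib import Path


def write_readme(path, title, originator, maintainer, file_descriptions):
    """Write a plain-text README for a data archive and return its text.

    originator and maintainer are (name, email) tuples; file_descriptions maps
    each archived file name to a one-line description. (Hypothetical helper.)
    """
    lines = [
        title,
        "=" * len(title),
        "",
        f"Data originator: {originator[0]} <{originator[1]}>",
        f"Data maintainer: {maintainer[0]} <{maintainer[1]}>",
        "",
        "Files in this archive:",
    ]
    # Sort file names so the README comes out the same on every run.
    for name in sorted(file_descriptions):
        lines.append(f"- {name}: {file_descriptions[name]}")
    text = "\n".join(lines) + "\n"
    Path(path).write_text(text, encoding="utf-8")
    return text


if __name__ == "__main__":
    # Demo with placeholder contacts and files, written to a throwaway folder.
    with tempfile.TemporaryDirectory() as folder:
        print(write_readme(
            Path(folder) / "README.txt",
            "Example Plot Data Archive",
            ("Example Originator", "originator@example.org"),
            ("Example Maintainer", "maintainer@example.org"),
            {
                "plot_data.csv": "Plot-level data exported from the project database.",
                "protocol.pdf": "Field data collection protocol.",
            },
        ))
```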

Export Data for Archiving: In this step you’ll export the data from the database into a non-proprietary file format, such as comma-separated values (CSV) or a SQL dump file. For long-term archiving, a non-proprietary format is the best option for ensuring that the data remain accessible well into the future. Another option at this stage is to upload your data to a public data repository that has ongoing support and maintenance.
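As a sketch of the export step, the Python example below uses an in-memory SQLite database as a stand-in for PostgreSQL. It defines a view that keeps only the columns to deliver and replaces a category code with its title from a data dictionary, then writes the view to CSV with Python’s `csv` module. The table, view, and column names and the sample rows are hypothetical. In PostgreSQL itself, the `COPY` command or psql’s `\copy` meta-command (with the `FORMAT csv` and `HEADER` options) could do the CSV export, and `pg_dump` can produce a plain-text SQL dump.

```python
import csv
import io
import sqlite3

SCHEMA = """
CREATE TABLE plot (
    plot_id        TEXT PRIMARY KEY,
    veg_class_code TEXT,
    internal_note  TEXT                 -- working column we do not want to archive
);
CREATE TABLE dict_veg_class (           -- data dictionary for veg_class_code
    code  TEXT PRIMARY KEY,
    title TEXT NOT NULL
);
-- Keep only archive columns and swap codes for dictionary titles.
-- LEFT JOIN keeps plots whose code is missing from the dictionary.
CREATE VIEW archive_plot AS
SELECT p.plot_id AS plot_id,
       d.title   AS veg_class
FROM plot AS p
LEFT JOIN dict_veg_class AS d ON d.code = p.veg_class_code
ORDER BY p.plot_id;
"""


def export_view_to_csv(conn, view_name, out_file):
    """Write every row of view_name to out_file as CSV with a header row."""
    # view_name is interpolated directly, so only pass trusted, known names.
    cursor = conn.execute(f'SELECT * FROM "{view_name}"')
    writer = csv.writer(out_file)
    writer.writerow([col[0] for col in cursor.description])
    writer.writerows(cursor)


if __name__ == "__main__":
    conn = sqlite3.connect(":memory:")
    conn.executescript(SCHEMA)
    conn.executemany("INSERT INTO plot VALUES (?, ?, ?)",
                     [("P01", "F", "check later"), ("P02", "S", None)])
    conn.executemany("INSERT INTO dict_veg_class VALUES (?, ?)",
                     [("F", "Forest"), ("S", "Shrub")])
    buffer = io.StringIO()
    export_view_to_csv(conn, "archive_plot", buffer)
    print(buffer.getvalue())
```

To write a real file, pass a file opened with `open("archive_plot.csv", "w", newline="", encoding="utf-8")` instead of the in-memory buffer; the file name is only an example.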

Ideas for Streamlining Metadata & Archiving

Following the metadata and archiving steps described above is essential for properly archiving your data, and will make sharing your data with others or returning to those data at a later date much more manageable. However, preparing metadata and archiving data can be somewhat time-consuming. Here are some ideas to help streamline the process.

- Database Design: Incorporate tables and views that contain metadata about your database tables and columns into your database design, which can help streamline data archiving. You could also include a boolean (i.e., True/False) column in your metadata table that specifies whether a column will be included in the data archive. In some database software, like PostgreSQL, you can include “comments” on database objects like tables, columns, and views in which you can add a description (i.e., metadata). The comments can later be queried from the database itself to provide a list of table and column descriptions.

- Database Views: Views are essentially saved queries. If you find yourself preparing data for archiving from the same database year after year, then views can be handy. You can use database views to prepare the data for archiving, for instance, by selecting only those columns you want to deliver, and replacing codes with titles from data dictionaries in columns that contain categorical data.

- Photo Management: Manage your photos using a database. This will allow you to associate data attributes with each photo file in a tabular format and query photo information in your database. This will make it easier to archive the photos, while also ensuring that the photos have relevant information associated with them. Assuming your photos are associated with an observation, for instance a field plot, in PostgreSQL you can create your own basic database table for photo data using the following SQL code:

```sql
CREATE TABLE public.photo_data
(
    serial                 serial PRIMARY KEY,
    plot_id                character varying(32),
    photo_observer_code    character varying(32),
    photo_filename         text,
    photo_filepath         text,
    camera_name            character varying(32),
    camera_gps_latitude    numeric(28,16),
    camera_gps_longitude   numeric(28,16),
    camera_gps_elevation_m numeric(28,16),
    camera_gps_h_datum     character varying(32),
    camera_gps_ts          timestamp with time zone,
    camera_gps_accuracy_m  integer,
    CONSTRAINT photo_photo_filename_camera_code UNIQUE (photo_filename, camera_name)
);

-- Column comments store the metadata in the database itself.
COMMENT ON COLUMN public.photo_data.serial
    IS 'Autopopulated unique serial identifier.';
COMMENT ON COLUMN public.photo_data.plot_id
    IS 'Unique code identifying the field plot.';
COMMENT ON COLUMN public.photo_data.photo_observer_code
    IS 'Code identifying the observer who took the photograph.';
COMMENT ON COLUMN public.photo_data.photo_filename
    IS 'File name of the photograph, including the file extension (e.g., photo01.JPG).';
COMMENT ON COLUMN public.photo_data.photo_filepath
    IS 'Path to the photograph on the file system.';
COMMENT ON COLUMN public.photo_data.camera_name
    IS 'Name of the camera used to record plot photos.';
COMMENT ON COLUMN public.photo_data.camera_gps_latitude
    IS 'Latitude of the photo, as recorded by a GPS-enabled camera. Units are decimal degrees.';
COMMENT ON COLUMN public.photo_data.camera_gps_longitude
    IS 'Longitude of the photo, as recorded by a GPS-enabled camera. Units are decimal degrees.';
COMMENT ON COLUMN public.photo_data.camera_gps_elevation_m
    IS 'Elevation of the photo, as recorded by a GPS-enabled camera. Units are meters.';
COMMENT ON COLUMN public.photo_data.camera_gps_h_datum
    IS 'The horizontal datum of the latitude (y) and longitude (x) coordinates of the photo as recorded by a GPS-enabled camera.';
COMMENT ON COLUMN public.photo_data.camera_gps_ts
    IS 'The date and time the GPS location for the photo was recorded in the field by a GPS-enabled camera. Reported in local time with a UTC offset.';
COMMENT ON COLUMN public.photo_data.camera_gps_accuracy_m
    IS 'Reported accuracy of the GPS location of the photo, as reported by a GPS-enabled camera. Units are meters.';
```

Database Maintenance

In a nutshell, database maintenance is not really necessary if your project is a one-time project whose database will never be used again; once the data is archived, there is nothing else to do. For long-term projects in which the database will be used repeatedly, however, periodic database maintenance will be required. This may include software and server updates, server fees (if using a cloud server), and updates to domain lists, to name a few. The key here is planning: be sure to include funds for maintenance in your budget, and include maintenance in your data management plan and project schedule.

Next Time on Elfinwood Data Science Blog

In this post I provided an overview of stage 6 in the data pipeline: Metadata & Archiving, and briefly covered database maintenance. In the next post I’ll get into the details of preparing a data management plan. If you like this post, please consider subscribing to this blog or following me on social media.

Copyright © 2020, Aaron Wells

3 thoughts on “The Data Pipeline: Metadata & Archiving”