It only takes one bad apple to spoil the bunch.

Introduction

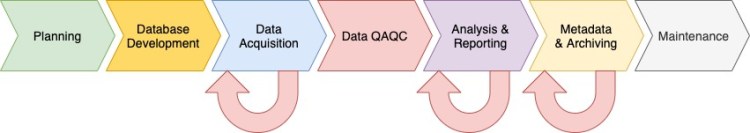

In the previous post I provided an overview of the data acquisition stage of the data pipeline (Figure 1). In this post I’ll provide an overview of the data Quality Assurance and Control (QAQC) stage. Data quality assurance and control is the process of checking data against consistent standards, and ensuring completeness and accuracy. The data QAQC process often isn’t entirely linear, that is, during QAQC you may notice an error in the data that may require you to go back a few steps to evaluate the data differently. Data QAQC also isn’t a finite process, rather its continual, and begins in the previous stage, and cascades into the next 2 states of the data pipeline: Analysis & Reporting and Metadata & Archiving.

Data QAQC

In my posts “The Data Pipeline: Planning” and “The Data Pipeline: Database Development“, I described the importance of proper data management planning and database design and development. In my previous post I described how data QAQC begins during the data acquisition stage. If you follow my advice from these previous posts then you’ll be ahead of game when you get the data QAQC stage. This is because you’ll have the budget to perform data QAQC, a database designed to facilitate data QAQC, and you’ll have prevented many errors, and detected and corrected errors and inconsistencies that did occur early on. Below are 4 important elements of data QAQC that we’ll consider for the remainder of this post:

- Data Provenance

- Database Controls

- Editor Tracking

- Missing Data

Data Provenance

Data provenance refers to the origin of a data element and its changes over time, including the processes that caused and materialized the changes (Viglas 2013). The first step in data provenance begins during the data acquisition stage with daily data backups.

“Data provenance refers to the origin of a data element and its changes over time, including the processes that caused and materialized the changes.”

Viglas (2013)

Back in the office an important step in maintaining data provenance is keeping a copy of the original field data with only primary key changes made as needed to get the data to conform to the database constraints. A copy of the original field data is made, and all future QAQC and resulting changes to the data occur on the copy.

Database Controls

Databases can include a number of built in controls to automatically control for data errors and facilitate data QAQC. At the most basic these include primary and foreign key relationships to establish referential integrity within your data, and reference tables that contain allowable values for specific data fields. Database systems with more sophisticated controls may include built in data validation checks, for instance using a trigger function to check that all values in a numeric data field conform to the allowable upper and lower bounds of that field. Database views, or saved queries, are another advanced database control. Views can be written that perform logical data checks to flag errors and inconsistencies, which can then be easily identified and corrected. Additionally, database tables should include columns for capturing data on the QAQC process (e.g. office notes field, data quality ranking fields) and for tracking which rows data QAQC has been completed on, when it was completed, and by whom.

Editor Tracking

Editor tracking, the process of maintaining a log of changes to the data, including who made the change and when, is another important component of data QAQC. The purpose of editor tracking is to keep a record of the data through the QAQC process such that someone could look back and track the changes to each data element. In its simplest form this may include simple text notes with the reviewers initials and a description of what was changed for a specific data element and why. Advanced editor tracking applications may include custom scripts designed to automatically track every change in the database, including the value that was changed, the user initials, and a timestamp. Editor tracking is related to data provenance in that it is part of the history of the data from its origins in the field; through QAQC, analysis and reporting; and through to data archiving.

Missing Data

Lastly, you’ll need to think though how to address missing data in your database. Initially missing data are represented by null values. However, null values are not very informative as they do not provide any information about why the data is missing. For instance, null values may represent data that should have been recorded but was accidentally missed, or they may represent something more meaningful. For instance, a common data attribute recorded in environmental field data is slope aspect, i.e., the compass direction a hill or mountain slope is facing. On a flat landscape position (slope = 0) there is no aspect. In the case of zero slope the fact that there is not an aspect value is different from forgetting to record a value for slope aspect. Hence, these 2 situations should be handled differently in the database. I’ll cover missing data more thoroughly in a future blog post.

Next Time on Elfinwood Data Science Blog

In this post I provided an overview of stage 4 in the data pipeline: Data QAQC. In the next post I’ll provide an overview of analysis and reporting, stage 5 of the data pipeline. If you like this post then please consider subscribing to this blog (see below) or following me on social media.

Literature Cited

Viglas, S.D., 2013. Data Provenance and Trust. Data Science Journal, 12, pp.GRDI58–GRDI64. DOI: http://doi.org/10.2481/dsj.GRDI-010

Follow My Blog

Get new content delivered directly to your inbox.

Copyright © 2020, Aaron Wells

One thought on “The Data Pipeline: Data QAQC”